18 of the most used Lean Startup experiments (+examples)

As a startup, you are searching for a business model that works. You do this through a process we call Lean innovation.

Validated learning is based on the concept that everything you think you know about your business model is just an assumption. The most critical assumptions (the ones that are riskiest) need to be validated or invalidated before you can turn your idea into a working and profitable business model.

These validations are done by running small experiments that give you enough proof to de-risk a part of your business model and turn assumptions into proven knowledge, helping you take a step forward.

If you learn enough about an assumption to understand that the assumption is not valid, you invalidate the assumption. To continue after an invalidation, you will have to change a part of your business model to adapt to the new situation. We call this a pivot.

There are many moving parts of a business model, so running just one experiment to validate the whole business model is impossible. You have to keep running small experiments to test aspects of your business model until you have validated your entire business model, one experiment at a time.

So how do you create those experiments?

Two types of experiments: Learn and Confirm experiments

First of all, there are two types of experiments you can run: Learn and Confirm.

Learn experiments, also called Research or Generative Experiments, are used to learn more about a certain topic. They create new assumptions.

Their counterparts are Confirm experiments. With a Confirm experiment, you check whether an existing assumption is valid or invalid.

Experiments are successful when they create new learnings (in case of a Learn experiment), or when they validate or invalidate an existing assumption (with a Confirm experiment).

Yes, invalidating an assumption is also a success!

You have learned a valuable lesson when you understand what is not true. You only fail if you did not learn anything (Learn experiments) or the result of your Confirm experiment is inconclusive.

In that case, you either ran the wrong experiment or ran the experiment wrong, and you wasted valuable time and resources in doing so.

Stage Relevant Experiments

With every experiment you run, it is important to ask: what is the riskiest assumption to (in)validate, or what do I need to learn right now to move forward?

Usually, you start by learning more about a certain subject and then run one or more Confirm experiments to check whether your assumptions are valid.

After helping hundreds of startups over the past 10 years, we identified four stages, each with its own focus area, to help you understand what to focus on at any point. We call these stages Problem, Solution, Revenue, and Scale, after the things you have to validate before moving on to the next stage.

- In the Problem stage, you focus mainly on the customer segment and their problems.

- In the Solution stage, you try to understand what your customer segment is trying to get done (the JTBD) and what solution would solve their problem or help them fulfill their JTBD.

- In the Revenue stage, you focus on the reasons to buy your solution (the Value Proposition) and how you can earn money, or make revenue.

- Last but not least, you focus on reaching your customers and the growth engine in the Scale stage.

Based on these stages and focus areas, we created the NEXT Canvas to help you group your stage-relevant assumptions and always understand what to focus on next.

Although the theory is rather simple, it is quite hard to come up with a good experiment that helps you move forward from a business idea to a working business model.

That is why it helps to understand which stage you are currently in. Each stage has only a limited set of experiments that make sense to apply.

Experiment design is hard, especially because most of us are entrepreneurs and not trained scientists. So rather than talking at length about what good experiment design is, let’s dive into some concrete examples.

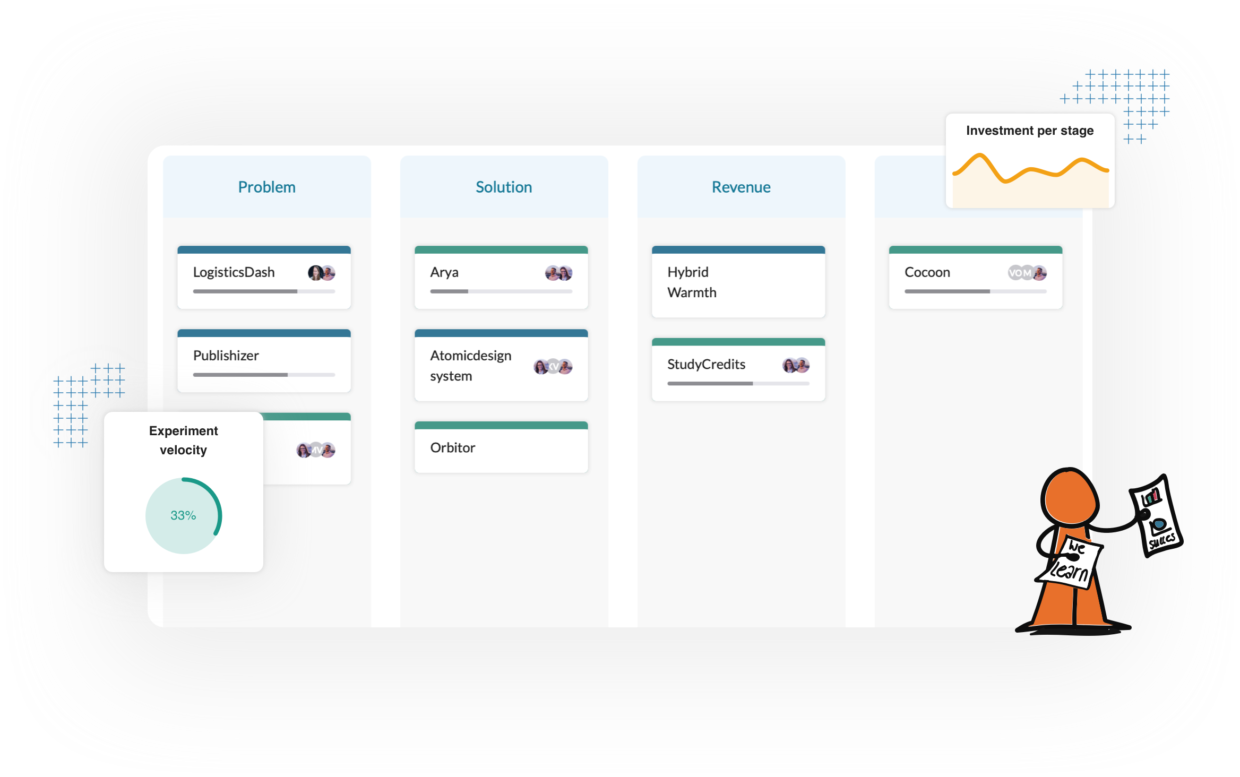

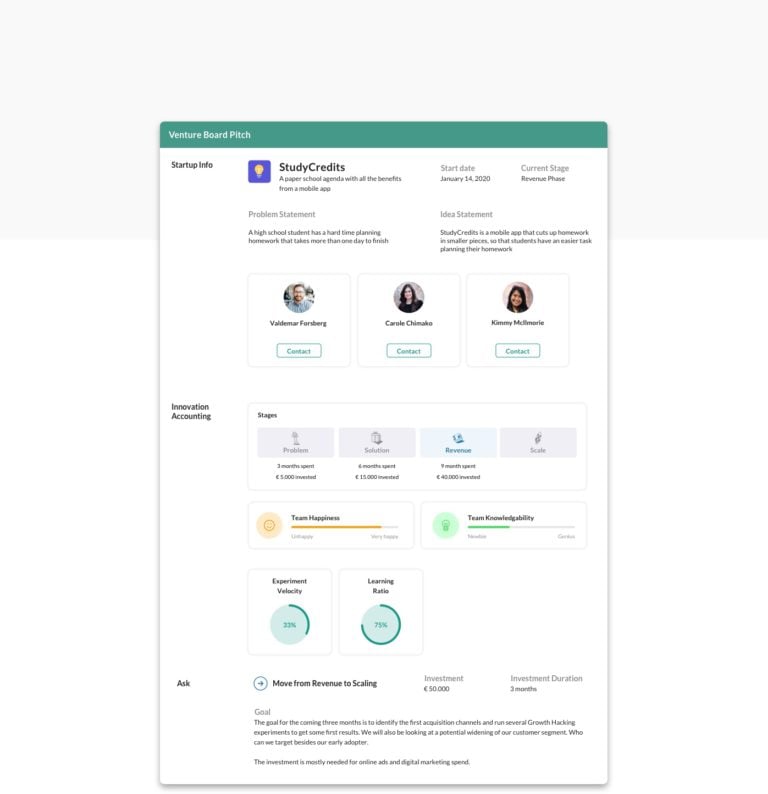

Test business ideas and run experiments with GroundControl

Everything we describe in this article, from how to run experiments to which experiments to run, is built into our software platform GroundControl.

GroundControl acts as a virtual coach and helps you to identify what is currently most risky and which experiments can help you de-risk.

When you have learned enough, GroundControl can automatically generate a one-page pitch deck of all your progress, so you can easily present it to your investors or internal stakeholders.

Learn more about how to test business ideas with GroundControl.

Stage 1: Experiments to validate the problem

The main question every (corporate) startup needs to answer first is: Are you really solving a real problem for actual people?

We often tell founders to first target the customer segment with the biggest pain: the people who are aware of their problem and are actively searching for a solution.

As an example, we tell the story of a person who fell off her bike (we are from Amsterdam) and broke her arm. Her bone is sticking out and she is screaming in pain. She will do almost anything and pay almost anything to get her ‘problem’ solved.

You want to build a painkiller, not a vitamin. But how do you validate that?

The first and most important: Customer Interviews

Both Learn and Confirm.

The customer interview is almost always the best way to start. It is THE tool to learn more about certain topics and an easy way to validate assumptions. If we could only recommend one experiment, it would always be to get out of the building and talk to your customers. Your customers have the knowledge, you only have assumptions.

We conduct customer discovery interviews to get a general overview of who your customers are and what they are trying to get done. In particular, the insights from customer discovery interviews should indicate what problems your customers are experiencing.

We previously wrote about how to run useful customer interviews, including how to set them up and how to analyze them. To do a real deep dive into customer interviews, we recommend reading The Mom Test by Rob Fitzpatrick. We also translated this book into Dutch, which you can find here.

Customer interviews are used to answer

- Who is your customer?

- What are their biggest pains?

- What are their behaviors and patterns?

- How are they solving their problems now?

Customer interviews explained

One of the essential tools or experiments you can do throughout the search for your working business model is conducting customer interviews. Whether you are finding out if there is a problem or want to know about what people are trying to do, the customer discovery interview is essential in understanding your customer’s behavior and needs.

Most startup founders tend to be scared of getting out of the building and talking to strangers. Instead, they compile surveys and questionnaires that they can send out from behind their computers. That is easier and far more comfortable, but the outcome will be averages rather than patterns, since you can only ask about things you already know and cannot uncover the things you don’t know.

Talking to real people and listening to what they say and how they express it will help you investigate and drill down to the why behind the what. Do you think they will not tell you? People love to complain about what is not working for them or tell you all about their passion. All you have to provide is a listening ear.

How to get started with customer interviews

Step 1: Plan the Interview

- Define the learning goal for the interviews.

- Define key assumptions about the customer segments.

- Create a topic map with topics and subtopics which you would like to learn more about regarding your customer (and their problems).

Make sure you can take notes effectively during the interview. Besides taking notes, you can also record the interview, but be aware that it takes a lot of time to listen back to a recording (so we don't recommend actively listening back to recordings).

Step 2: Conduct the Interview

- Frame: Summarize the purpose of the interview with the customer.

- Open: Warm-up questions get the customer comfortable talking.

- Listen: Let the customer talk and follow up with "what", "why" and "how" questions.

- Close: Wrap up the interview and ask for referrals, or if applicable, a follow-up interview.

As noted above, questions that start with "what", "why" and "how" generally lead to better answers regarding your customer's behavior and the problem that they might be experiencing. When introducing new topics (or asking follow-up questions), use your topic map for reference, but be aware that topics you didn't anticipate might come up.

Step 3: Do a debrief of the Interview

Make notes right away. Video and audio recordings should only be used as backup reference.

Step 4: The Interpretation of the results

Look back at your notes. Have you found answers for any of the following?

- Job: What activities are making the customer run into the problem?

- Obstacle: What is preventing the customer from solving their problem?

- Goal: What is your customer trying to accomplish?

- Current solution: How are they currently solving their problem?

- Decision trigger: Were there pivotal moments where the customer made key decisions about a problem?

- Interest trigger: Which questions did the customer express interest in?

- Persons: Are there any other people involved with the problem or solution?

- Emotions: Is there anything specific that causes the customer to express different emotions?

(Inspired by The Real Startup Book)

Desk Research

Learn experiment.

Desk research gathers and interprets available information from external sources. In other words, you are using search engines, libraries, or other sources of information to discover what others already learned and what is out there relating to your idea.

This method gives you a general overview of which competitors and alternatives already exist, what customer behavior looks like regarding the problem you are solving, and which technologies can enrich your idea.

Desk research is used to answer

- What competitors of our idea operate in the market already?

- What alternative solutions exist for our idea?

- Where might you find your customers (and what is their behavior)?

- What pains might your customers experience regarding a problem (or existing product)?

- What technologies can impact your idea?

Desk research explained

This form of market research is considered to be secondary research, which is "simply the act of seeking out existing research and data." Secondary market research aims to use existing information to derive and improve your research strategy before any first-person research.

With existing markets, a tremendous amount of information can be found online or purchased from market research consultants.

This type of research can be performed for any market but is mostly used by companies that target existing markets. There is typically limited information available for start-ups creating new markets.

The first step is to find the relevant reports and analyze them to learn something about your idea.

We can distinguish two directions of research. In "market status" analysis, you look at:

- Current competitors: to identify how your idea relates to current solutions and alternatives that are already available in the market.

- User behaviors: How often users have to use a product similar to your idea, and in which circumstances.

- User opinions: How customers experience the use of competitors' products, or how they experience a problem.

- Current technology: Benchmark technology to understand what kind of standards have been set regarding speed, accessibility, etc.

Identifying relevant reports will give you an overview of the current market and available solutions.

In "trend research" you look at:

- User behaviors: What new behaviors are emerging?

- Technology: What upcoming technology may disrupt the market and our own idea?

This analysis helps you anticipate how your idea might evolve, avoid pitfalls, or even come up with new ideas.

How to get started with desk research

You must be specific to get information that is relevant to your particular question, i.e., the current status of your idea or product. For instance, if you want to launch a service that enforces privacy when publishing pictures on the Internet, look beyond the (very large) population of people who publish photos on the Internet. Figure out who is interested in privacy and takes it seriously; this is not necessarily just a subset of the first population. There may be people who currently do not publish any photos out of concern for privacy.

Where to find helpful information:

- Google or other internet search engines

- Social media platforms (user behavior and opinions)

- Publications relevant to your target market

- Libraries

- Professional associations

- Business groups

- Professional fairs

- Governmental organizations

- Public and nonprofit organizations that generate a lot of data (hospitals, transportation systems, etc.)

(Inspired by The Real Startup Book)

Picnic in the Graveyard experiment

Learn experiment.

With the Picnic in the Graveyard experiment, you interview founders or team members of failed startups to find out what they learned on their journey, what they did wrong, and why they decided to stop. The most valuable lessons come from people who have tried before and failed. Do they have any lessons that you can use to prevent failure? This technique involves exploring "near miss" failures similar to your product idea, in order to generate ideas about what to test and how to build a business model that could work this time.

The experiment is used to answer

- What related products have been created (and failed) in the past?

- What did customers like/not like about previously created products?

- What advice would founders of failed products similar to your idea (near misses) give you, if they were to go after the idea again?

- Which features have to be included in the product?

- How is this product different from similar products offered in the past?

The Picnic in the Graveyard experiment explained

Sean Murphy described it best: “Do research on what’s been tried and failed. Many near misses have two out of three values in a feature set combination correct (some just have too many features and it’s less a matter of changing features than deleting a few). If you are going to introduce something that’s “been tried before” be clear in your own mind what’s different about it and why it will make a difference to your customer.”

The goal of this technique is to identify a unique way to angle a product, so that you avoid a previously committed error. You will be introducing the idea at a later time, which will be to your benefit; however, it’s likely that previous failures will have useful feedback for you.

With this technique, you are aiming to construct a fuller picture of what’s been tried in the past, in order to identify potential landmines. This helps navigate a similar space, but with the benefit of the previous founders’ experience.

How to get started

- Perform some desk research (Google, etc.) to identify previous attempts at entering a similar market with a similar product to your product/product idea. Depending on the idea type, you may also find it useful to ask your local librarian if they can help you find relevant case studies. The research you do here should take one or two days maximum.

- Use social media platforms to seek out customers of these previous companies. Look for “Likes” on Facebook of the product/company name. Look for old tweets mentioning the company, etc.

- Reach out and contact these previous customers, and interview them. Find out what they liked and disliked about the previous company.

- Try to find the founders or project/product leads if at a big company, and interview them about their experiences.

You can conduct the interviews with the previous customers and founders in a similar fashion as is done when conducting customer discovery interviews.

(Inspired by The Real Startup Book)

Stage 2: Experiments to validate your solution

Once you have validated that there is an actual problem, it is wise to test the interest in a solution first, before running off and building one. We usually do another round of customer interviews first, but after that, there are plenty of alternatives:

Landing Page experiment

Confirm experiment.

A landing page is ideal for testing your solution idea and validating interest from your customers. There are hundreds of ready-made templates available, and plenty of services that let you create a landing page easily if even a template is too technical for you.

What is important when using a landing page is that you ask for some kind of currency. An email address or signup is enough, but people have to ‘pay’ you something to really show their interest. Visitors and pageviews are not enough.

This experiment is used to answer

- Is the definition of your customer segment correct?

- Is the way you present your solution to your customer segment attractive enough for them?

- In what ways can you attract new customers within your customer segment?

- Is the customer interested enough in your solution to leave their contact details or make a purchase?

How to get started

- Create a landing page, for example on Carrd.co.

- Try to clearly express your product (value proposition) on the landing page. Do this both visually and textually.

- Decide if your customer can leave their contact details on the page or will be able to make a Dry Wallet order.

- If your customer places a fake order, make sure to show an explanation afterward that thanks them for the attempt and informs them that ordering is not yet possible.

- If possible, contact your customer afterward to understand more about their impressions of your proposal on the landing page.
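As a rough sketch of how you could evaluate a landing page experiment, the snippet below compares the signup conversion rate against a success criterion that you define before the experiment starts. The function name, the 10% threshold, and the minimum of 100 visitors are illustrative assumptions, not benchmarks from this article.

```python
def evaluate_landing_page(visitors: int, signups: int,
                          min_conversion: float = 0.10,
                          min_visitors: int = 100) -> str:
    """Return 'validated', 'invalidated', or 'inconclusive'.

    min_conversion and min_visitors are hypothetical success criteria;
    pick your own before you start sending traffic to the page.
    """
    if visitors < min_visitors:
        # Too little traffic to draw a conclusion either way.
        return "inconclusive"
    conversion = signups / visitors
    return "validated" if conversion >= min_conversion else "invalidated"


# Made-up numbers: 30 signups out of 250 visitors is a 12% conversion.
print(evaluate_landing_page(visitors=250, signups=30))
```

Note that only signups (the 'currency') count toward the result; visitors are just the denominator.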

Explainer Video experiment

Confirm experiment.

Dropbox got famous with their explainer video MVP. When your product is too complex to explain easily or to show as a prototype, an explainer video might be ideal. It was hard for the Dropbox team to show the magic of Dropbox before Dropbox existed. With an explainer video, and again by asking for some kind of currency, they were able to validate the interest in Dropbox. They were overwhelmed with beta signups.

Creating an explainer video allows you to describe the proposition of your solution clearly. It can be placed on a Landing Page or shared through different channels (e.g., via social media or email).

The experiment is used to answer

- How well does your customer segment understand the proposition of your solution?

- Does the audience find the value proposition compelling?

- Is the product buzzworthy, and does the target audience want to share it with their friends/colleagues?

- What channels are the most responsive?

How to get started

- Define success criteria and what metric you want to test with the use of your video.

- Make sure you’re able to measure whatever you want to focus on (e.g., if you’re going to measure click through rates, you’ll need to link from the video to a page where traffic is measured).

- Create an outline for the story you want to tell about your product. Ideally, your story should also connect with customers on an emotional level.

- Create a (marketing) plan where you’re going to share your video and how you’re going to reach a decently sized audience. Also consider if your video is part of something else (e.g., a landing page) or should be shared independently.

- Create and publish your explainer video as you planned in the previous steps

- Evaluate the results of your pre-defined metrics. Did your video reach enough people? Did it convey the right message? Did customers make positive interactions afterward? Why (or why not) was this the case?

If possible, contact customers afterward (if you know who they are) and ask them about their impressions of your video and product.
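To make the "which channels are the most responsive" question concrete, here is a minimal sketch that ranks channels by click-through rate from the video to a measured page. The class, the field names, and the numbers are hypothetical; use whatever metric you defined as your success criterion.

```python
from dataclasses import dataclass


@dataclass
class ChannelResult:
    channel: str
    views: int    # how often the video was watched on this channel
    clicks: int   # clicks from the video to the page where traffic is measured

    @property
    def click_through_rate(self) -> float:
        # Guard against channels that produced no views at all.
        return self.clicks / self.views if self.views else 0.0


def rank_channels(results):
    """Sort channels from most to least responsive."""
    return sorted(results, key=lambda r: r.click_through_rate, reverse=True)


# Made-up example data for two channels.
results = [
    ChannelResult("social", views=1500, clicks=60),
    ChannelResult("email", views=400, clicks=48),
]
for result in rank_channels(results):
    print(f"{result.channel}: {result.click_through_rate:.1%}")
```

Ranking by rate rather than by absolute clicks keeps a small but responsive channel from being hidden behind a large, indifferent one.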

(Inspired by The Real Startup Book)

Competitor usability testing experiment

Learn experiment.

Competitor usability testing means observing your customers as they use a competitor’s products or services, to gain insights into the mindset of the user, common issues, and potential improvements for your own product. It does not have to be a competitor; it could also be an alternative solution. If your customer is currently solving their problem with Excel, ask them to walk you through their way of working so you can learn what they are already doing.

The experiment is used to answer

- What job is your customer trying to get done?

- What is the minimum feature set to solve the problem?

- Why isn’t the use of a competitor’s product good enough to solve your customer’s problem (or is it)?

- What do you want to do differently from your competitors?

- What do you want to copy from your competitors?

How to get started

- Find products of your competitors that you can use for this experiment.

- Let your customer clearly express beforehand to you what they’re hoping to achieve from using the products (what they’re trying to get done)

- Ask your customer to use the competitors’ solutions and talk you through their thought process while using them.

- Observe your customers while they use the competitors’ products. Try to talk/intervene as little as possible. Focus on your customer’s impressions, intentions, expectations, and emotions.

- Evaluate with your customer what they liked and didn’t like about the competitors’ products. Ask them whether these products would fully help them achieve what they want (which they expressed beforehand) and, if not, what additional features they might require.

If necessary, follow up with additional questions that help you learn what your solution should look like.

(Inspired by The Real Startup Book)

Solution Interview experiment

Learn and Confirm experiment.

In customer solution interviews, you propose your solution to gather feedback from potential customers and see whether you might convince them to start using your solution.

The solution interview is used to answer

- Does the customer see value in the product's value proposition (if not, why)?

- What do you need to explain further about the solution?

- What objections do customers have?

- What do you need to improve about (the concept of) your solution?

- Will a customer offer some form of commitment to try your solution?

How to get started

- Prepare a pitch that takes a few minutes at most, in which you describe clearly and concisely what your solution is, what it offers, and how it works. Consider creating some sort of visual or prototype which conveys this message further.

- Prepare a topic map with which you can ask questions after your pitch. Make sure that your questions won't be suggestive or leading. You’re generally looking for answers about how your customer perceives your product, how they might use it in their daily activities, and whether they understood everything correctly.

- Before starting your pitch, make sure you’re talking to the right person. Do they have the right role within their organization, are they aware of their problem, and do they match the JTBD you found? If you’re not talking to the right person, consider not continuing the interview, as it would lead to useless data or useless commitments.

- The aim of this experiment is not to sell to the customer but to learn whether you can convince a customer with your current proposal. If you can’t, zoom in on the objections or doubts they have after hearing your pitch. Your prepared topic map is a good starting point for this.

If your customer isn’t enthusiastic about your solution, the goal is to learn why that is the case and what you might need to reconsider about your solution (or customer segment). Don’t keep pitching to try to convince the customer, but search for the why instead.

If your customer expresses enthusiasm, consider asking for a commitment.

Ask for a Commitment experiment

Confirm experiment.

Asking for commitment shows you whether your customer is just friendly when they express enthusiasm about your solution, or if they’re serious about using it. It’s a great way to validate a customer's interest until you can sell your solution.

Many start-ups fail because they believe their customer wants to buy their product, but then, later on, nobody is actually buying their solution. It's very easy for someone to tell you that they're enthusiastic about your solution and that you should contact them when they can buy it. However, you will find that these are empty words in most cases and that they rarely lead to an actual purchase.

If you can't be sure that a customer would buy your solution, even if they tell you that they would, how can you find validation that your solution is good enough to keep working on? At this stage, you're probably not at a point where you can immediately sell your solution, but you would still like to find some form of validation that your customer is serious about using your solution.

This is where 'commitment' comes into play. Commitment can be described as your customer offering you something to show that they're serious about you and your solution.

We recognize three types of commitments: time, reputation, and financial commitment.

A time commitment could include:

- A clear next meeting with known goals

- Sitting down to give feedback on prototypes

- Using a demo/trial of the product for a non-trivial period

Reputation risk commitments might be:

- An introduction to peers or team

- An introduction to a decision-maker (important stakeholders)

- Giving a public testimonial or case study

Financial commitments are easier to imagine and include:

- Letter of intent (a gentleman's agreement to purchase that is not legally binding)

- Pre-order

- Deposit

At this stage, every interview/pitch should end with a clearly formulated commitment or the realization that the person you're talking to isn't going to use your (current) solution.

Please note that, as time goes on, you need to progress from time commitments to reputation commitments to financial commitments. You can only be sure that your customer will buy your product when you have a financial commitment in your pocket.

You might be anxious to ask your customers about commitments (if they're not offering any themselves) but doing so will immediately show you how serious they are about your solution. This will give you an indication of whether you are on the right track or not.

If your customer does not want to give any form of commitment, try to find out why. Based on this, try to take away their objections or decide that they’re not going to be your customer.

(Derived from The Mom Test by Rob Fitzpatrick)
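If you want to keep track of how far each customer has progressed, a simple ordered scale can help. The sketch below is one possible way to do that, assuming you log commitments per customer yourself; the names and the example log are hypothetical.

```python
from enum import IntEnum


class Commitment(IntEnum):
    """Commitment types, ordered from weakest to strongest signal."""
    NONE = 0
    TIME = 1
    REPUTATION = 2
    FINANCIAL = 3


def strongest_commitment(commitments):
    """Return the strongest commitment a customer has given so far."""
    return max(commitments, default=Commitment.NONE)


# Hypothetical log of commitments collected after interviews and pitches.
log = {
    "Customer A": [Commitment.TIME, Commitment.REPUTATION],
    "Customer B": [],
    "Customer C": [Commitment.TIME, Commitment.FINANCIAL],
}
for customer, given in log.items():
    print(customer, strongest_commitment(given).name)
```

Customers who stay at NONE or TIME for a long time are the ones to ask directly, or to stop counting as future buyers.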

Start with prototype experiments

Confirm experiment.

When you ask a developer to build your first version, it often takes months to finish. The right frameworks need to be used, the right choices to be made so you don’t have to refactor in the future. But when you ask a developer for a prototype or proof-of-concept, it can often be done in two weeks. We love hacking something together, especially in the early stages. I’m 100% sure that the code you write in the first few years will be completely rewritten later on, even if you put a lot of time and effort into your lines.

Get that prototype hacked together with as few resources and in as little time as possible to test your solution. Only when your customers and users try your product do you really learn what they want. If it doesn’t scale, it is often a good experiment. Make use of the Concierge MVP or Wizard of Oz (explained later in this blog post) to remove hard-to-build components, use web services to outsource everything that is not your core business, and get that prototype out in two weeks.

Paper prototyping experiment

The paper prototype is a way of quickly testing your solution by using paper drawings, flyers, or even PowerPoint presentations. You mimic the user interaction or the process of the solution to learn whether this is what the customer is looking for. Does this solve their problem?

The experiment is used to answer

- At which point(s) does your solution not function as expected?

- Is your solution intuitive for your customer to use and navigate?

- What form should your solution take?

- What (extra) information do you need to give to your customers?

How to get started

- Create a simple mockup on paper for each step in the interaction that you are testing. Try to imitate on paper what your customer would see when they use your solution. If you are creating a digital solution, every screen your customer goes through should have a mockup on paper.

- Ask someone to interact with your paper prototype. Ideally, this is someone in your customer segment, but it should at least be someone who wasn’t involved in creating your mockups. You can also do this digitally by presenting your drawings in PowerPoint.

- Let someone in your team play the role of your solution.

- Ask your customer to interact with your paper prototype as if it is an actual application. They can physically interact with your product by pressing on or moving the paper around. Ask them to think aloud so that you can understand their thought process.

- The person who plays the role of your solution removes and adds papers based on your customer’s interactions. While doing so, they should try to talk as little as possible.

- Have someone observe and take notes of your customer’s interactions. If necessary, follow-up with questions to learn more about their experience when your customer is done interacting with the product.

- If necessary, create new mock-ups to modify interaction flows and layouts to continue testing.

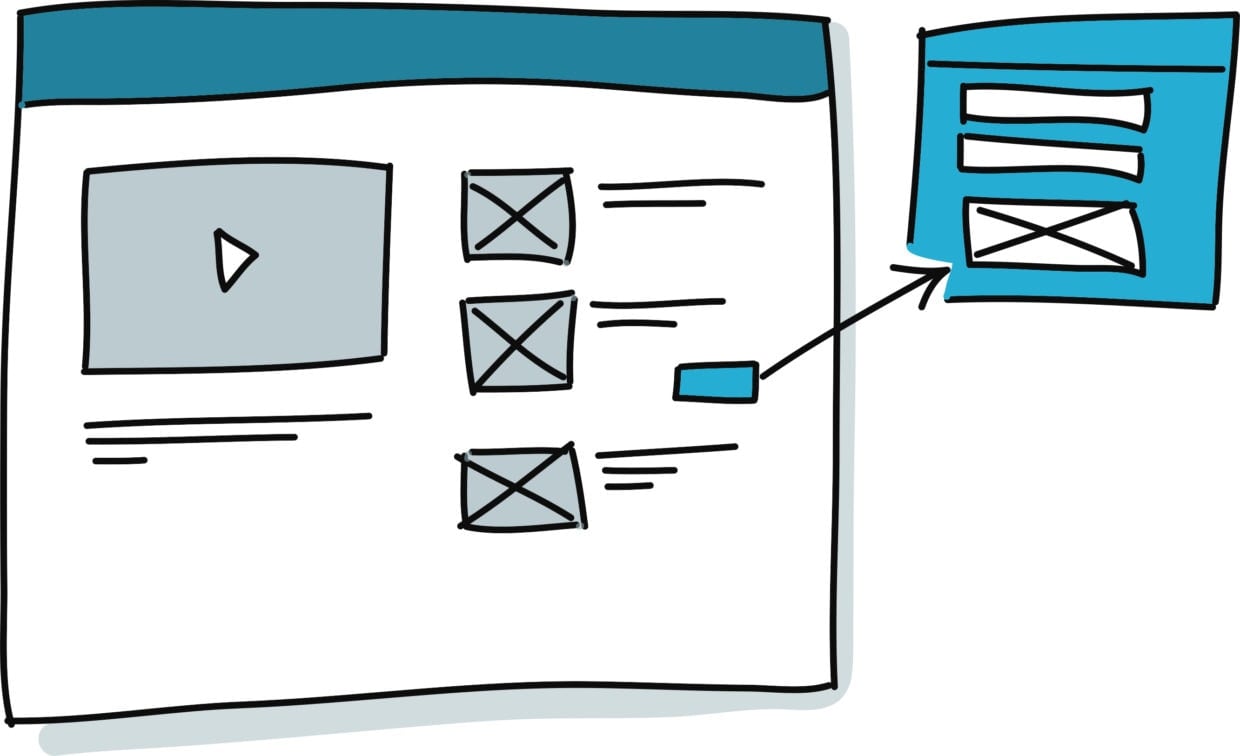

Clickable prototyping experiment

Learn experiment.

Like paper prototyping, clickable prototyping allows you to develop and evaluate how the design of your solution should be. Instead of using paper, you create an online environment with which your customer can interact.

The experiment is used to answer

- At which point(s) does your solution not function as expected?

- Is your solution intuitive for your customer to use/navigate?

- Are there points where your customer requires intervention/extra help?

- What (extra) information do you need to give to your customers?

How to get started

- Create an online environment in which your customer interacts with your product by clicking through it. You can use tools like Adobe XD. Note that this experiment is only here to test basic interactions of your customer; you shouldn’t attempt to build a fully functioning product yet.

- If you are uncertain about what interactions your customer might make, start testing with a Paper prototype, as creating a clickable prototype takes significantly longer.

- Ask your customer to interact with your clickable prototype. Ask them to think aloud so that you can understand their thought process.

- Observe your customer’s interactions. You can do this by sitting next to them or by sharing screens through video calling.

- Try not to explain or intervene once your customer has started interacting with your prototype.

- If necessary, follow-up with questions to learn more about their experience when your customer is done interacting with your product.

- If necessary (if your customer got stuck or something went wrong), modify your clickable prototype and test again.

Fake button / Smokescreens experiments

Confirm experiment.

Instead of building a feature and then seeing whether people are actually interested, why not add a button to your product and see how many people click? When we first wanted to validate whether the users of our portfolio company Study Credits were interested in different skins for their school agenda, we added a simple link saying ‘Change skin’. We tracked the click event in Mixpanel and, after a click on the link, displayed a page explaining that we were testing a new feature. One week later we had our answer: a lot of teens wanted to change their skin. Time for the next experiment: see if, and how much, they were willing to pay.
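As a rough illustration of how the outcome of a fake-button test could be evaluated, the sketch below computes the click rate from exported event counts and compares it with a success criterion chosen before the experiment starts. The class and function names, the counts, and the 10% threshold are hypothetical; they are not the Study Credits numbers, and the code does not call the Mixpanel API.

```python
from dataclasses import dataclass


@dataclass
class FakeButtonResult:
    users_exposed: int  # unique users who saw the fake button
    users_clicked: int  # unique users who clicked it at least once

    @property
    def click_rate(self) -> float:
        # Avoid dividing by zero when nobody saw the button
        if self.users_exposed == 0:
            return 0.0
        return self.users_clicked / self.users_exposed


def evaluate(result: FakeButtonResult, success_threshold: float) -> str:
    """Compare the observed click rate with a threshold chosen up front."""
    if result.users_exposed == 0:
        return "inconclusive"
    if result.click_rate >= success_threshold:
        return "validated"
    return "invalidated"


# Hypothetical numbers, for illustration only
result = FakeButtonResult(users_exposed=400, users_clicked=92)
print(f"click rate: {result.click_rate:.1%}")
print(evaluate(result, success_threshold=0.10))
```

Fixing the threshold before looking at the data keeps the Confirm experiment honest: the result either validates or invalidates the assumption instead of being interpreted afterwards.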

Last week I spoke with a startup that is building the next-generation newsreader. They spent six weeks developing a ‘breaking news’ section, from design and UX to real-time push notifications, only to discover that the feeds they were serving never offered any breaking news and their users didn’t want breaking news from this newsreader. Their users preferred CNN or equivalents for that. They could have saved six weeks of work by adding a fake opt-in, running a Wizard of Oz model that sends push notifications manually, or first interviewing their users.

Concierge Model testing

Learn experiment.

In a Concierge Model test, you simulate product features without using technology. All interactions are performed manually by humans. Your customer is aware that the work is done manually, which leads to a higher perceived value than the eventual automated solution would have. You can use the Concierge Model to quickly learn whether your solution solves your customer’s problem and what brings the most value.

A great practical example of a Concierge test comes from Peerby. When they wanted to test their rental model, called Peerby Go, they did not build a whole new marketplace and rental system. Instead, they created a simple landing page where you could type in what you wanted to rent and request it. That request was emailed to one of the employees, who would pick up the phone, find the item either in their own peer-to-peer Peerby system or in a rental shop, negotiate a price, drive to the location to pick the item up, and bring it to the customer. It’s like having your own… concierge!

The experiment is used to answer

- What interactions are required to solve your customer’s problem?

- Does your value proposition match the customer’s expectations?

- Is the customer segment willing to pay for your solution?

- What hurdles (still) exist in delivering a solution to your customer?

How to get started

- Write down a description of your solution and its value proposition in such a way that it is clear to your customer segment.

- Design a service, matching your value proposition, in which you manually interact with your customer to solve their problem.

- Talk to your customer segment (early adopters) and offer them your service (in exchange for payment).

- Gather data about interactions that need to be made and customer satisfaction while delivering the service.

- Afterwards, evaluate with your customers whether your service delivered everything they expected, and if they would keep paying you in the future to use your solution.

Note that because the customer knows someone is taking personal care of the job, the perceived value is significantly higher than that of the automated product you will probably build to scale.

Wizard of Oz experiment

Confirm experiment.

The Wizard of Oz model is comparable to the Concierge model. You use manual labor (your own hands, an intern, Mechanical Turk) to ‘automate’ tasks in your backend that are for now too costly to build (in time or resources). For the customer, it seems to happen automatically, but you are actually doing it by hand. The difference with the concierge model is that the customer does not know your Wizard of Oz test is done manually, while with the concierge model the customer is aware of the fact that someone is personally taking care of their needs.

We devoted an entire blog post to the difference between the Wizard of Oz model and the Concierge Model, which you can read here.

The experiment is used to answer

- What interactions does your customer need to make with your product?

- Do the features that you came up with help solve your customer's problem?

- Does your product create value for your customers?

- Is your product a must-have/must-use for your customer?

- Are customers willing to pay for your solution?

How to get started

- Build a prototype of your product that your customer can interact with without using complex technology.

- Dedicate at least one person to simulating the (technological) behaviour of your product without your customer being aware of them.

- Let your customers interact with your prototype and observe their interactions.

- If possible, use follow-up questions to learn about their perception of your product after your customer is done interacting with your product.

- If your customer is enthusiastic, consider offering the service for a longer period, in exchange for payment.

Stage 3: Experiments to validate payment

Pre-order experiment

Confirm experiment.

Kickstarter is the ideal example of pre-orders. Most often used for hardware, books, and music, a pre-order is perfect when the product is too hard to build as a simple version. When writing a book, it is easy to start with a landing page and give away one or two chapters after the visitor ‘pays’ with their email address. After that, a pre-order works great to see how easy it is to sell a certain number of books.

Dry Wallet experiment

Confirm experiment.

In the fake button example, we wrote about the Study Credits test. We wanted to see how many people were interested in another skin for their school agenda. After validating the demand for that feature, we wanted to know how much they were willing to pay. We created a simple screen with 5 screenshots of different skins, and below each screenshot was a buy button with a price. Instead of building the whole payment system, we again showed a message explaining that we were testing a feature after the user clicked the buy button. We used 3 different prices for different skins to see whether the price mattered. We tracked the clicks with Mixpanel, and two weeks later we had learned that probably not enough people were willing to buy skins to make it a viable revenue model. We could have spent a month building it only to discover that it would not generate enough revenue; instead, we learned the same after a day’s work!
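A minimal sketch of how the clicks from such a Dry Wallet test might be compared across price points is shown below. The function names, prices, and counts are hypothetical and are not the Study Credits data. Because a click on a fake buy button is not a real payment, the revenue figure is best read as an optimistic upper bound.

```python
def buy_rates(views: dict[float, int], clicks: dict[float, int]) -> dict[float, float]:
    """Return the share of viewers who clicked 'buy' for each price point."""
    return {
        price: (clicks.get(price, 0) / seen if seen else 0.0)
        for price, seen in views.items()
    }


def revenue_per_viewer(rates: dict[float, float]) -> dict[float, float]:
    """Upper bound on revenue per viewer, if every click became a purchase."""
    return {price: price * rate for price, rate in rates.items()}


# Hypothetical numbers, for illustration only (prices in euros)
views = {0.99: 500, 1.99: 500, 2.99: 500}
clicks = {0.99: 20, 1.99: 10, 2.99: 5}

rates = buy_rates(views, clicks)
for price, value in revenue_per_viewer(rates).items():
    print(f"{price:.2f}: buy rate {rates[price]:.1%}, max revenue per viewer {value:.4f}")
```

Multiplying the revenue per viewer by the number of users you expect to reach gives a quick ceiling on what the feature could earn, which is often enough to decide whether building the payment system is worth it.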

In a previous blog post, I wrote about how to execute the Dry Wallet and Price Elasticity Survey experiments.

Stage 4: Experiments for scaling

You might have heard of Growth Hacking before. It is a popular term to explain the type of marketing start-ups do. It basically is experiment-driven marketing. So yes, you will run more experiments to create traction!

In comparison to previous stages, when Growth Hacking, we do not have pre-defined experiments since you are figuring out which channels work, what communication works best, and how to convince your potential customers to sign up. There are several areas, though, that you can try to get more leads to your solution.

Traffic Generation

Traffic generation is all about generating as much traffic to your solution’s website as possible.

There are several ways to create more traffic.

You could, for example, insert yourself into discussions that your customers are having online and share your insights. As CEO of your idea, you have gathered many learnings about your solution and market that you can share and use as a trigger for people to learn more about you.

Creating content from that knowledge can also be done in the form of blog posts or articles on your website. Google and other search engines index these articles, and when people search for these topics, they will end up on your website and hopefully convert. You can then use these articles to share on social media platforms or put them into newsletters for potential new customers.

Podcasts are another great way to create content. Invite current and future customers to be your guest and interview them about their challenges and how they solved them.

It’s a great way to attract prospective customers, and since you are the one hosting the podcast, it also shows your expertise on the topic.

Even if you have a small number of listeners, you can create new customers if they are in your customer segment.

Email marketing

Email marketing is a great way to keep your current and future customers in the loop. It is often easier for your customer to sign up for a newsletter than for your solution. But once they have signed up, you can bombard them with interesting content showing that you know everything about their problems and how to solve them, hopefully converting them into customers along the way.

Advertising

Last but not least, we have advertising to boost Traffic Generation and Email Marketing. There are several ways to set up advertising in a cost-efficient way, for example, by using Google Ads to advertise on important keywords, or by using LinkedIn or Facebook to insert your solution into the timelines of your customers.

Try to steer away from large and expensive marketing campaigns for now. It is much easier to run experiments on platforms like Google, LinkedIn, and Facebook, plus they offer you great tools to measure the outcomes.

You are still learning how to create traction and are probably not ready to spend tons of money on print or TV ads.
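To make "measuring the outcomes" concrete, here is a small sketch that compares ad channels by cost per signup after a short test. The class and function names, the budgets, and the visit and signup counts are all hypothetical; in practice the numbers would come from the reporting tools of each platform.

```python
from typing import NamedTuple


class ChannelResult(NamedTuple):
    name: str
    spend: float   # total ad spend during the test, in euros
    visits: int    # visitors the ads sent to the landing page
    signups: int   # visitors who signed up


def cost_per_signup(r: ChannelResult) -> float:
    """Ad spend divided by signups; infinite when nobody signed up."""
    return r.spend / r.signups if r.signups else float("inf")


def rank_channels(results: list[ChannelResult]) -> list[ChannelResult]:
    """Sort channels from cheapest to most expensive cost per signup."""
    return sorted(results, key=cost_per_signup)


# Hypothetical numbers, for illustration only
results = [
    ChannelResult("Google Ads", spend=100.0, visits=250, signups=10),
    ChannelResult("LinkedIn", spend=100.0, visits=80, signups=8),
    ChannelResult("Facebook", spend=100.0, visits=400, signups=5),
]

for r in rank_channels(results):
    conversion = r.signups / r.visits if r.visits else 0.0
    print(f"{r.name}: {cost_per_signup(r):.2f} per signup, {conversion:.1%} conversion")
```

Running each channel with the same small budget keeps the comparison fair and the cost of a failed experiment low.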

Go in-depth on growth hacking

Growth Hacking is a topic that is too big to dive into deeply within this blog. Luckily, there are great resources online that you can use to learn all about Growth Hacking and online marketing. Our favorite is ConversionXL, specifically their Growth Marketing Mini Degree, which you can find at: https://bit.ly/2PsvCCp. They offer a 7-day trial for 1 euro in which you can already go in-depth on growth hacking techniques.

So what is next?

By reading this guide and applying its content to your startup, you have learned the importance of understanding the problem you are solving and for whom you are solving it. You explored different ways of testing potential solutions before building them. You tested whether your customers are willing to pay and how to create a business case. Finally, you dipped your toes in scaling your solution to an ever-growing market.

Now it is time to get out there and put all this information into practice! Because getting out there and just doing it is by far the most important lesson.

Timan Rebel has over 20 years of experience as a startup founder and helps both independent and corporate startups find product/market fit. He has coached over 250 startups in the past 12 years and is an expert in Lean Innovation and experiment design.